PySpark 4.0 Tutorial For Beginners with Examples - Spark By Examples

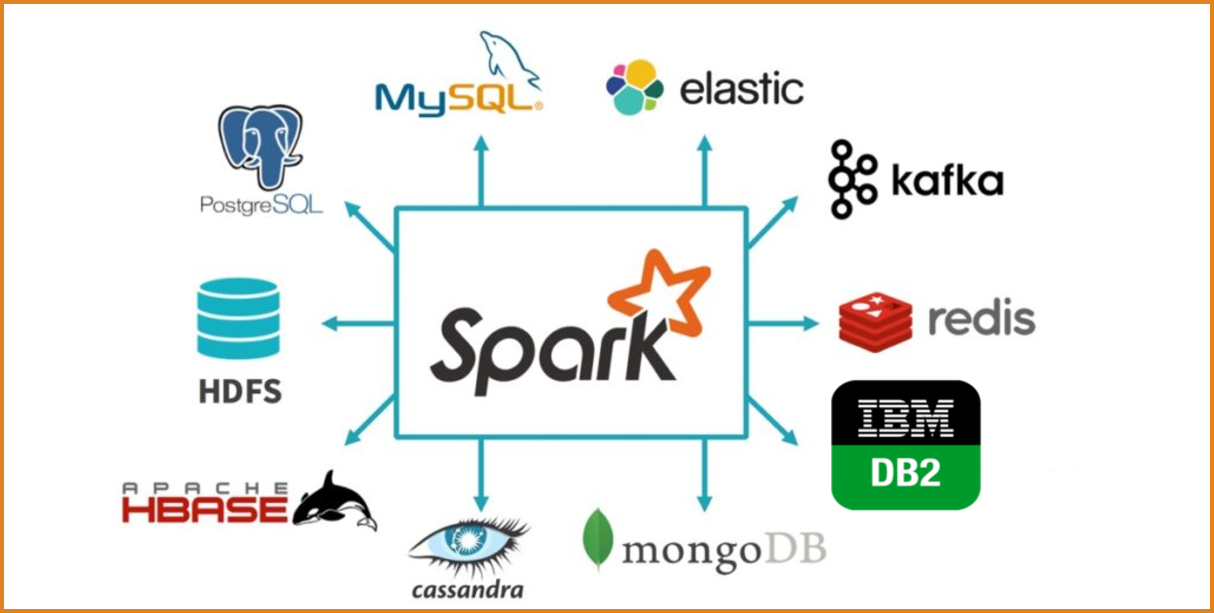

PySpark Tutorial: PySpark is a powerful open-source framework built on Apache Spark, designed to simplify and accelerate large-scale data processing and analytics tasks. It offers a high-level API for the Python programming language, enabling seamless integration with existing Python ecosystems.

How to Learn PySpark From Scratch in 2026 | DataCamp

Nov 24, 2024 · What Is PySpark? PySpark is the combination of two powerful technologies: Python and Apache Spark. Python is one of the most used programming languages in software development, particularly for data science and machine learning, mainly due to its easy-to-use and straightforward syntax. On the other hand, Apache Spark is a framework that can handle large amounts of unstructured data. Spark was ...

PySpark Tutorial - GeeksforGeeks

Jul 18, 2025 · PySpark is the Python API for Apache Spark, designed for big data processing and analytics. It lets Python developers use Spark's powerful distributed computing to efficiently process large datasets across clusters. It is widely used in data analysis, machine learning and real-time processing.

PySpark Overview — PySpark 4.1.1 documentation - Apache Spark

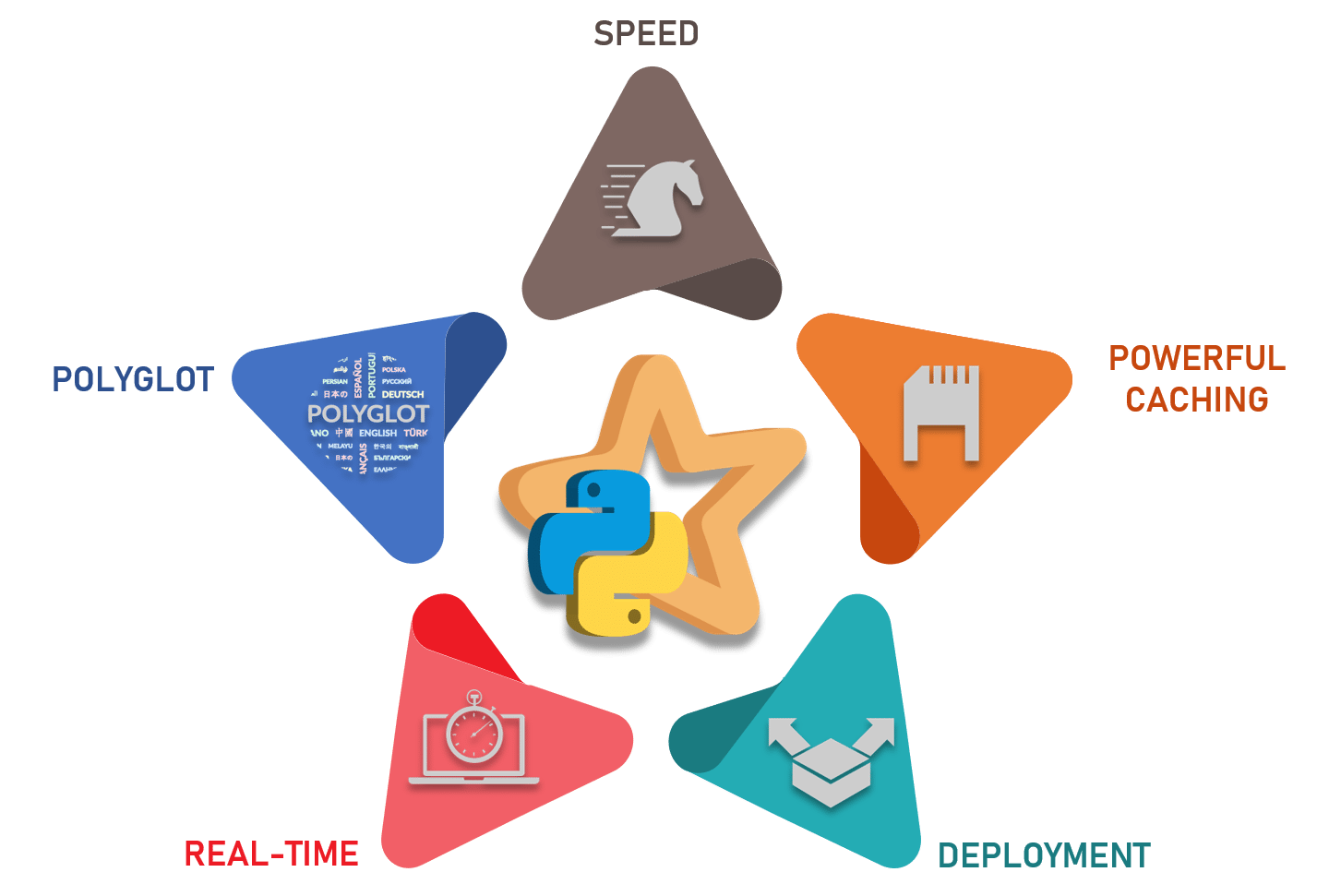

Jan 2, 2026 · PySpark combines Python's learnability and ease of use with the power of Apache Spark to enable processing and analysis of data at any size for everyone familiar with Python. PySpark supports all of Spark's features such as Spark SQL, DataFrames, Structured Streaming, Machine Learning (MLlib), Pipelines and Spark Core.

Pyspark Tutorials - Pyspark

PySpark is the Python API for Apache Spark, an open-source framework designed for distributed data processing at scale. With its powerful capabilities and Python's simplicity, PySpark has become a go-to tool for big data processing, real-time analytics, and machine learning.

Apache Spark with Python 101: Quick start to PySpark (2026)

Hands-on guide to Apache Spark with Python (PySpark). Learn ...

PySpark Tutorial for Beginners: Key Data Engineering Practices

Jul 22, 2024 · PySpark combines Python's simplicity with Apache Spark's powerful data processing capabilities. This tutorial, presented by DE Academy, explores the practical aspects of PySpark, making it an accessible and invaluable tool for aspiring data engineers. The focus is on the practical implementation of PySpark in real-world scenarios. Learn how to use PySpark's robust features for data ...

Introduction to PySpark: A Comprehensive Guide for Beginners

What is PySpark? PySpark is the Python API for Apache Spark, an open-source framework designed for big data processing and analytics. Originating from UC Berkeley's AMPLab and now thriving under the Apache Software Foundation, Spark has become a cornerstone of data engineering worldwide. PySpark brings this power to Python users, eliminating the need to learn Scala or Java, Spark's native ...

GitHub - alexandrarrdg/pyspark-learning: A comprehensive, hands-on ...

A comprehensive, hands-on learning path for mastering Apache Spark with Python. This repository contains 8 interactive Jupyter notebooks that take you from PySpark fundamentals to advanced topics like machine learning and recommendation systems.

PySpark for Beginners - How to Process Data with Apache Spark & Python

Jun 26, 2024 · What is PySpark? PySpark is the Python API for Apache Spark, a big data processing framework. Spark is designed to handle large-scale data processing and machine learning tasks. With PySpark, you can write Spark applications using Python. One of the main reasons to use PySpark is its speed.

Learn Data Science and AI Online | DataCamp

Learn Data Science & AI from the comfort of your browser, at your own pace with DataCamp's video tutorials & coding challenges on R, Python, Statistics & more.

Apache Spark™ - Unified Engine for large-scale data analytics

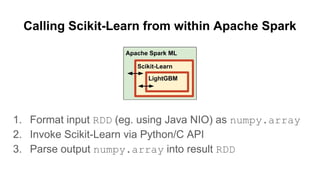

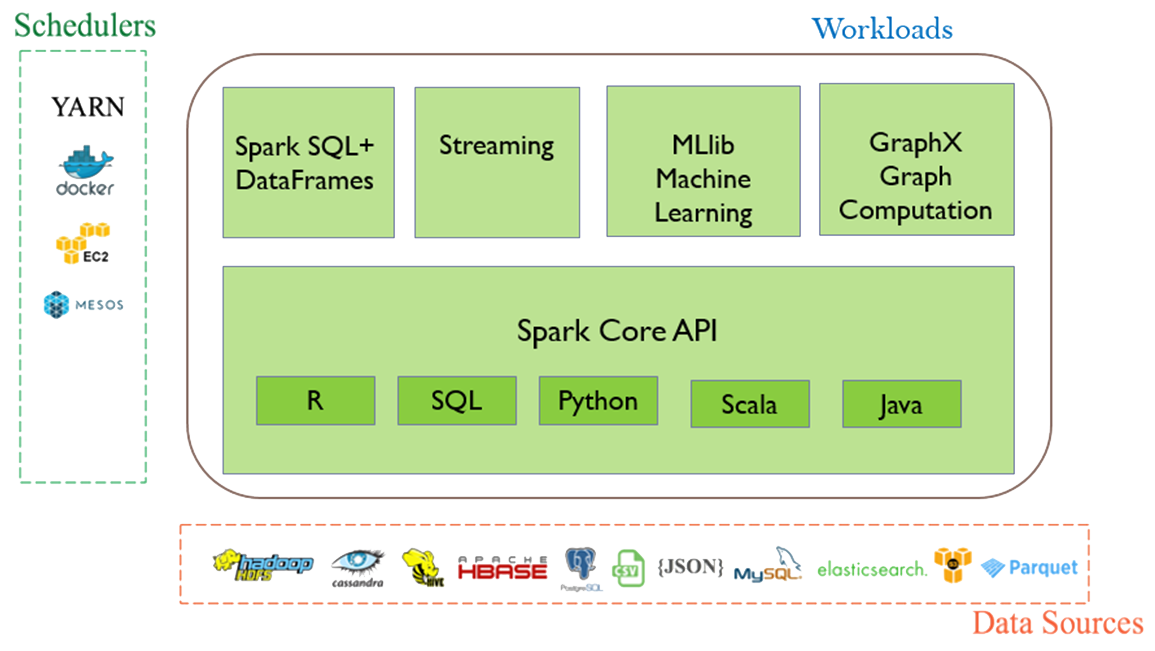

Apache Spark is a multi-language engine for executing data engineering, data science, and machine learning on single-node machines or clusters.

Overview - Spark 4.1.1 Documentation

Apache Spark is a unified analytics engine for large-scale data processing. It provides high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, pandas API on Spark for pandas workloads, MLlib for machine learning, GraphX for graph ...

GitHub - dmlc/xgboost: Scalable, Portable and Distributed Gradient ...

XGBoost is an optimized distributed gradient boosting library designed to be highly efficient, flexible and portable. It implements machine learning algorithms under the Gradient Boosting framework. XGBoost provides parallel tree boosting (also known as GBDT, GBM) that solves many data science problems in a fast and accurate way.

GitHub - charanneelam123-dot/data-quality-monitoring-framework

Today · Reusable, pipeline-agnostic data quality framework built on PySpark. Plug into any Databricks notebook, AWS Glue job, or dbt post-hook. All thresholds are driven by YAML config, with zero hardcoded values.
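
To make the "thresholds driven by config" idea in the snippet above concrete, here is a minimal plain-Python sketch. It is not the repository's code: the config keys, the `null_rate` metric, and the `run_checks` helper are all hypothetical. A dict stands in for a parsed YAML file and a list of dicts stands in for a Spark DataFrame.

```python
# Hypothetical stand-in for a parsed YAML config; every key name is an assumption.
CONFIG = {
    "checks": [
        {"column": "user_id", "metric": "null_rate", "max": 0.0},
        {"column": "email", "metric": "null_rate", "max": 0.25},
    ]
}


def null_rate(rows, column):
    """Return the fraction of rows where `column` is missing or None."""
    if not rows:
        return 0.0
    missing = sum(1 for row in rows if row.get(column) is None)
    return missing / len(rows)


# Registry mapping metric names used in the config to their implementations.
METRICS = {"null_rate": null_rate}


def run_checks(rows, config):
    """Evaluate each configured check; thresholds come only from `config`."""
    results = []
    for check in config["checks"]:
        value = METRICS[check["metric"]](rows, check["column"])
        results.append(
            {
                "column": check["column"],
                "metric": check["metric"],
                "value": value,
                "max": check["max"],
                "passed": value <= check["max"],
            }
        )
    return results


# Illustrative data: two of the four rows have no email.
rows = [
    {"user_id": 1, "email": "a@example.com"},
    {"user_id": 2, "email": None},
    {"user_id": 3, "email": "c@example.com"},
    {"user_id": 4, "email": None},
]

for result in run_checks(rows, CONFIG):
    print(result)
```

Because the limits live in the config rather than in the code, tightening or loosening a check means editing one value, not redeploying the pipeline.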

MLflow - Open Source AI Platform for Agents, LLMs & Models

The largest open source AI engineering platform for agents, LLMs, and ML models. Debug, evaluate, monitor, and optimize your AI applications. Built for teams of all sizes.

Databricks: Leading Data and AI Platform for Enterprises

Databricks offers a unified platform for data, analytics and AI. Build better AI with a data-centric approach. Simplify ETL, data warehousing, governance and AI on the Data Intelligence Platform.

Spark ETL Framework: ETL Patterns Guide - DEV Community

3 days ago · ETL Patterns Guide - Spark ETL Framework. A practical guide to building reliable, scalable... Tagged with spark, dataengineering, etl, python.